Predicting Survival in Titanic! Part 2

Using ML/AI to predict the chances of survival for each passenger on the ship.

This post continues a previous article, which covered getting the data ready for building a model to predict the survival chances of passengers travelling on the Titanic.

The data has been downloaded from Kaggle and the link to the competition is here.

Full .ipynb code files for both Part 1 and 2 can be found on GitHub here.

Recap

In the previous post, we performed the following steps on the dataset:

- Feature transformation — to arrive at normal distribution

- Feature Engineering — addition of a few new features from information extracted from existing ones

- Cleaning and Imputation — dropped a few columns and filled some missing information

The final data from the previous post will be used as input henceforth. One thing to note is that the data has both train and test sets combined and we will now be splitting those.

This post may seem a bit long, but once you understand the steps of the process, the rest is a cakewalk.

Getting Started — Part 2

Importing the data and taking a look at it.

Observations:

- We missed dropping the ‘Ticket’ column earlier, so it will be dropped now.

- The target variable ‘Survived’ sits between other features, so let us shift it to the right-most position for ease of explanation and better understanding.

- The ‘Pclass’ feature shows as int64. Let us convert it to ‘object’ type so that the model treats it as a category rather than a number, which may give a better result.
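The three observations above translate into a few lines of pandas. The following is only a minimal sketch on a made-up toy dataframe, not the notebook's actual code; the column values are invented for illustration.

```python
import pandas as pd

# Toy stand-in for the combined data from Part 1 (all values are made up).
df = pd.DataFrame({
    "Pclass": [3, 1, 2],
    "Survived": [0.0, 1.0, None],  # rows from the test set have no target
    "Ticket": ["A/5 21171", "PC 17599", "244373"],
    "Sex": ["male", "female", "male"],
})

# 1. Drop the leftover 'Ticket' column.
df = df.drop(columns=["Ticket"])

# 2. Move 'Survived' to the right-most position.
other_columns = [col for col in df.columns if col != "Survived"]
df = df[other_columns + ["Survived"]]

# 3. Store 'Pclass' as 'object' so it is later encoded as a category.
df["Pclass"] = df["Pclass"].astype(object)

print(df.dtypes)
```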

Further transformation of data

The data we have now is cleaned, imputed and transformed. What else can possibly be done?

As we can see, there are many columns with the data type ‘object’, and we need to encode them into numbers before we feed any of them into the model. Pandas has a built-in function, get_dummies, which encodes all ‘object’ type columns in a pandas dataframe.

After dummification, the data type of all object columns has changed. Not only that, dummification has produced a separate column for each unique entry under each ‘object’ type column. If we take Pclass as an example, we now have three columns, one each for Pclass 1, 2 and 3. The data under all such columns will be either a 0 or a 1.
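To make the encoding concrete, here is a small sketch using pandas' get_dummies on a toy dataframe (the values are made up). Passing dtype=int is my own choice here so that the new columns hold 0/1; recent pandas versions would otherwise produce True/False.

```python
import pandas as pd

# Toy data: 'Pclass' is stored as object, 'Sex' is text, 'Age' is numeric.
df = pd.DataFrame({
    "Pclass": pd.Series([1, 2, 3, 1], dtype=object),
    "Sex": ["male", "female", "female", "male"],
    "Age": [22.0, 38.0, 26.0, 35.0],
})

# Encode every object/text column into one 0/1 column per unique value.
# Numeric columns such as 'Age' are passed through unchanged.
encoded = pd.get_dummies(df, dtype=int)

print(encoded.columns.tolist())
print(encoded.head())
```

Each row has exactly one 1 among the Pclass_* columns, which is why this is also called one-hot encoding.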

Let us move ahead towards splitting the data now.

The concept of Train, Validation and Test datasets

We were given the dataset in 2 parts, one was to train the model and the other was for actual prediction. This covers our train and test datasets respectively.

Now, when we train our model, how do we check its performance? We cannot train the model on 100% of the ‘train’ dataset and check the performance on the same data. This would lead to data leakage (meaning the model already knows the answers to the questions being asked), and the score would look better than it really is.

Also, we cannot check the performance using the ‘test’ data, since we do not have the target variable values for it to compare with our predictions.

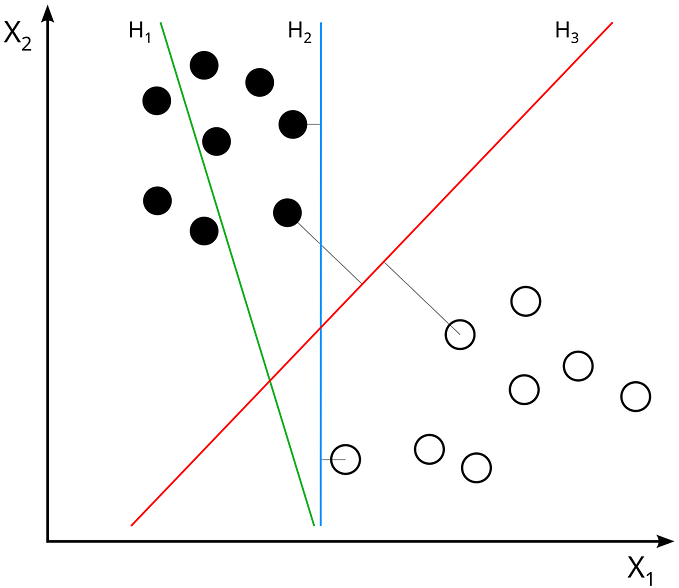

So we will have to carve a small portion out of our ‘train’ dataset and reserve it for checking purposes. We will not include it in our training and will call it the ‘validation’ dataset. The picture shows the dataset we have and the way it is going to be split.

Dark Blue — Available data

Light Blue — Unavailable data

The horizontal yellow line is the split between ‘train’ and ‘test’ data already shared with us. Observe how we have no target values available for the test data.

The small portion within the ‘train’ dataset highlighted in orange will be taken out and called the validation set. It will be used for performance evaluation and will be around 15% of the train dataset.

It is common practice to call the target variable ‘y’ and all other features ‘X’.

We will obviously be using the encoded (dummyfied) data for splitting.
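Here is one way the combined, encoded data could be separated back into train and test parts. This is a sketch under an assumption of mine: that rows coming from the test set can be recognised by a missing ‘Survived’ value. The notebook may identify them differently (for example by row position), and the toy values below are made up.

```python
import pandas as pd

# Toy encoded data: the last two rows play the role of the test set,
# so their target value is missing (assumption for this sketch).
encoded = pd.DataFrame({
    "Age": [22.0, 38.0, 26.0, 35.0],
    "Sex_male": [1, 0, 0, 1],
    "Survived": [0.0, 1.0, None, None],
})

is_train = encoded["Survived"].notna()

# X holds the features, y holds the target.
X_train = encoded.loc[is_train].drop(columns=["Survived"])
y_train = encoded.loc[is_train, "Survived"].astype(int)

# The test set has features only; its target is what we want to predict.
X_test = encoded.loc[~is_train].drop(columns=["Survived"])

print(X_train.shape, y_train.shape, X_test.shape)
```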

You must be wondering why we have not yet created the X_val and y_val datasets mentioned earlier. We will create these shortly, using the train_test_split method from the sklearn library.

Baseline Models

Since we have already established that this is a classification problem, we will import only the classifiers from the sklearn library, along with the evaluation methods.

We have now initialized and stored the classifiers into a dictionary.
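A dictionary of classifiers might look like the sketch below. The exact set of models and their settings here are my own picks for illustration, not necessarily those in the notebook. The post also uses XGBoost, which I have left out so that the sketch depends on sklearn alone.

```python
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.tree import DecisionTreeClassifier

# Name -> untrained classifier. The names are used later as row labels
# when comparing scores. random_state keeps the results repeatable.
classifiers = {
    "Logistic Regression": LogisticRegression(max_iter=1000),
    "Decision Tree": DecisionTreeClassifier(random_state=42),
    "Random Forest": RandomForestClassifier(random_state=42),
    "Gradient Boosting": GradientBoostingClassifier(random_state=42),
}

for name, clf in classifiers.items():
    print(name, "->", type(clf).__name__)
```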

The process we are going to follow now will be:

- Create validation data from the train data

- Feed the train data into the model

- Check the performance using validation data

- Store all evaluation scores in data frame for comparison

- Hyper-parameter tune the best baseline model

- Re-evaluate the hyper-tuned model to check for improvements in score

- Feed the test data into the model for final prediction

Since we have multiple models and we might be iterating through the process multiple times with various combinations of parameters, it makes sense to use pipelines. Pipelines are a way to create a workflow for iterative procedures. They may seem a little counter-intuitive to a beginner, so I will instead define some functions that replace the functionality of pipelines but at the same time allow us to see how data passes from one step to another and gives us the final result.

Function 1

This function takes in X and y, i.e. the feature variables and the target variable, and gives us back four datasets: the features and the target for both train and validation.

We had skipped creating any validation set earlier, so this function will create the same for us.
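A minimal sketch of such a function is shown below. The function name, the stratify option and the fixed seed are my own additions for illustration; the 15% validation size comes from the description above. The toy data is made up.

```python
import pandas as pd
from sklearn.model_selection import train_test_split


def make_validation_split(X, y, val_size=0.15, seed=42):
    """Return X_train, X_val, y_train, y_val (hypothetical helper).

    stratify=y keeps the share of survivors similar in both parts.
    """
    return train_test_split(
        X, y, test_size=val_size, stratify=y, random_state=seed
    )


# Toy data: 100 rows, two features, balanced target.
X = pd.DataFrame({"f1": range(100), "f2": range(100, 200)})
y = pd.Series([0, 1] * 50)

X_tr, X_val, y_tr, y_val = make_validation_split(X, y)
print(len(X_tr), len(X_val))
```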

Function 2

This function performs the following tasks:

- extracts the train and validation set using the function defined earlier

- fits the model on the train set

- generates predictions for validation as well as actual test set

- calculates the evaluation scores and feeds them into the dataframe defined for the purpose

- plots AUC_ROC curve

- plots feature importance

- plots trees for tree based algorithms
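The core of the tasks listed above (split, fit, predict, score) can be sketched as follows. I have left out the three plotting steps to keep it short, and the function name, the choice of scores and the synthetic data are assumptions of mine rather than the notebook's code.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, f1_score, roc_auc_score
from sklearn.model_selection import train_test_split


def fit_and_score(name, clf, X, y, X_test, score_rows):
    """Fit clf, record validation scores, return test predictions."""
    X_tr, X_val, y_tr, y_val = train_test_split(
        X, y, test_size=0.15, stratify=y, random_state=42
    )
    clf.fit(X_tr, y_tr)

    val_pred = clf.predict(X_val)              # hard 0/1 predictions
    val_prob = clf.predict_proba(X_val)[:, 1]  # probability of class 1

    score_rows.append({
        "model": name,
        "accuracy": accuracy_score(y_val, val_pred),
        "f1": f1_score(y_val, val_pred),
        "auc": roc_auc_score(y_val, val_prob),
    })
    return clf.predict(X_test)


# Synthetic stand-in for the Titanic data: 250 labelled rows, 50 unlabelled.
X_all, y_all = make_classification(n_samples=300, n_features=6, random_state=0)
X, y, X_test = X_all[:250], y_all[:250], X_all[250:]

score_rows = []
test_pred = fit_and_score(
    "Logistic Regression", LogisticRegression(max_iter=1000), X, y, X_test, score_rows
)
scores = pd.DataFrame(score_rows).set_index("model")
print(scores)
```

Note that AUC is computed from predicted probabilities, not from the 0/1 predictions, which is why predict_proba is called separately.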

Function 3

This function runs a loop over all the classifiers stored in the dictionary and feeds them to the functions defined earlier.
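A loop of this kind could look like the sketch below. To keep it self-contained I use a compact stand-in for the evaluation step that records only AUC; the names run_all and evaluate, the two classifiers and the synthetic data are hypothetical.

```python
import pandas as pd
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier


def evaluate(clf, X_tr, y_tr, X_val, y_val):
    """Fit one classifier and return its validation AUC."""
    clf.fit(X_tr, y_tr)
    return roc_auc_score(y_val, clf.predict_proba(X_val)[:, 1])


def run_all(classifiers, X, y):
    """Evaluate every classifier on the same train/validation split."""
    X_tr, X_val, y_tr, y_val = train_test_split(
        X, y, test_size=0.15, stratify=y, random_state=42
    )
    rows = [
        {"model": name, "auc": evaluate(clf, X_tr, y_tr, X_val, y_val)}
        for name, clf in classifiers.items()
    ]
    return pd.DataFrame(rows).set_index("model").sort_values("auc", ascending=False)


X, y = make_classification(n_samples=300, n_features=6, random_state=0)
classifiers = {
    "Logistic Regression": LogisticRegression(max_iter=1000),
    "Decision Tree": DecisionTreeClassifier(max_depth=3, random_state=42),
}
results = run_all(classifiers, X, y)
print(results)
```

Using one shared split for all models keeps the comparison fair, since every classifier is judged on the same validation rows.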

Let us run the manually defined pipelines and get the results

The output of this is too long to be pasted here, so I would suggest going to the GitHub repository and looking at the detailed output. To summarize, the output shows, for each classifier, the evaluation scores, the ROC curve, the feature importance and, for tree-based algorithms, the tree plot.

The feature importance information can be used to check which features actually contributed to the prediction within the model. This can further be used to drop the not-so-useful columns to fine-tune the model, although we will skip that step to avoid making the process complex.

The other scores derived from the confusion matrix are used for any classifier, and each score has a different meaning. The details would lead us to a whole new topic: evaluation criteria for classification. I suggest you go through the detailed explanations available on the internet. We will simply generate all the scores and store them in our dataframe for reference.
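As a small taste of how these scores relate to the confusion matrix, here is a sketch on eight made-up labels. It only shows three of the common scores and is not taken from the notebook.

```python
from sklearn.metrics import (
    accuracy_score,
    confusion_matrix,
    precision_score,
    recall_score,
)

# Made-up true labels and predictions (1 = survived, 0 = did not survive).
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

# For binary labels sklearn lays the matrix out as [[TN, FP], [FN, TP]].
tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()

accuracy = accuracy_score(y_true, y_pred)    # (TP + TN) / all rows
precision = precision_score(y_true, y_pred)  # TP / (TP + FP)
recall = recall_score(y_true, y_pred)        # TP / (TP + FN)

print(tn, fp, fn, tp)
print(accuracy, precision, recall)
```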

Let us check the scores

We have the best AUC for XGBoost and best accuracy for Logistic Regression. However, as mentioned earlier, choosing an evaluation score is a totally different subject and we will not get into it. Our target here will be to understand the process of building machine learning models.

Now, we have the scores and predictions

We can now try to improve the scores. The process to be followed is called hyper-parameter tuning.

Hyper-parameter Tuning

This is a method where we specifically feed a set of parameter values while defining our classifier, to improve its performance. But how do we know which parameter values are the best? For this we use a parameter search (commonly called grid search), where we define a range of values for each parameter and let the search find the best set of parameter values based on a chosen evaluation criterion, AUC in our case.

Let's look at how this is done, using Random Forest as an example.

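We defined a range of values for each parameter and fed it into the RandomizedSearchCV method. CV stands for cross-validation, which means the method scores each candidate combination on several train/validation folds to arrive at the best parameters. Note that RandomizedSearchCV, unlike an exhaustive grid search, does not try every combination: it samples a fixed number of combinations from the ranges, which is much faster when the ranges are large.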
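A sketch of such a search for Random Forest is given below. The parameter ranges, n_iter, the number of folds and the synthetic data are illustrative values of mine, not the ones used in the notebook.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

# Synthetic stand-in for the training data.
X, y = make_classification(n_samples=200, n_features=8, random_state=0)

# Candidate values for each hyper-parameter (illustrative ranges).
param_distributions = {
    "n_estimators": [50, 100, 200],
    "max_depth": [3, 5, 8, None],
    "min_samples_split": [2, 5, 10],
    "min_samples_leaf": [1, 2, 4],
}

search = RandomizedSearchCV(
    RandomForestClassifier(random_state=42),
    param_distributions=param_distributions,
    n_iter=5,            # number of random combinations to try
    scoring="roc_auc",   # AUC is the criterion, as in the post
    cv=3,                # 3-fold cross-validation per combination
    random_state=42,
)
search.fit(X, y)

print(search.best_params_)
print(round(search.best_score_, 3))
```

Here only 5 of the 108 possible combinations are evaluated. Raising n_iter explores more of the ranges at the cost of a longer run.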

Each classifier has a different set of parameters and can be tuned in a similar fashion.

Once this process is over, we can find the best set of parameters using the best_estimator_ attribute, the output of which will be as follows:

We will then re-define our classifier with exactly these parameters.
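The sketch below shows both ways of getting the tuned classifier: reading it straight from best_estimator_, or re-defining it by hand from best_params_. The small search at the top exists only to make the example self-contained; its ranges and the synthetic data are made up.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import RandomizedSearchCV

X, y = make_classification(n_samples=200, n_features=8, random_state=0)

# A deliberately tiny search, just to have something to read results from.
search = RandomizedSearchCV(
    RandomForestClassifier(random_state=42),
    param_distributions={"n_estimators": [20, 50], "max_depth": [3, 5]},
    n_iter=3,
    scoring="roc_auc",
    cv=3,
    random_state=42,
)
search.fit(X, y)

# Option 1: the search already holds a refitted best model.
best_model = search.best_estimator_

# Option 2: re-define the classifier explicitly with the winning values.
best_params = search.best_params_
tuned_rf = RandomForestClassifier(random_state=42, **best_params)
tuned_rf.fit(X, y)

print(best_params)
```

Re-defining the classifier by hand makes the chosen values visible in the code, so the search does not need to be re-run every time.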

This is how we can get a hyperparameter tuned classifier.

The same process will be followed for all classifiers. All classifiers are then again fed into the manually defined functions (pipelines).

Let us check the output

The highlighted scores show a significant improvement in the XGBoost classifier after parameter tuning. We will therefore use the predictions from this model.

For the sake of understanding, I have included the predictions from all models and stored them in a dataframe.

I urge you to go into this dataframe and observe how predictions vary among the models.

This concludes our prediction process for the Titanic dataset. The best AUC score we achieved was 90%, with the tuned XGBoost classifier.

I hope this long post proved useful and helped you understand the process better. There are other ways to solve this problem. Better feature engineering or transformation logic may well have resulted in even better scores. I urge you to look around on the internet and find those solutions too.

Data science is not just about building models for prediction. There are many business insights generated by amazing Exploratory Data Analysis techniques. The same could be done on this dataset. We skipped the part to keep our focus on model building.

Please let me know if I could have done anything in a simpler or better way. I am always eager to take suggestions for improvement.

I will be posting more content in the future. Happy learning to all!